As-a-Service Cybercrime Models

As-a-Service cybercrime models reduce the barrier to entry for cyber criminals as they no longer need expertise in every domain. Threat actors can increasingly outsource or supplement missing skills through the broader cybercrime-as-a-service ecosystem, and thus these models continue to grow in popularity within the cybercriminal underground. This has led to multiple templates in this sphere, such as Phishing-as-a-Service, Botnet-as-a-Service, DDoS-as-a-Service, and notably Ransomware-as-a-Service (RaaS) [1].

What is Extortion-as-a-Service?

Extortion-as-a-Service (EaaS) businesses function as a formalized way for cyber threat actors to offer extortion services to others for a fee or profit share, and represent an evolution of extortion operations from the double-extortion ransomware model. Building on the RaaS model, extortion has become a distinct profit stream, separate from the encryption payload. This separation of functions (data theft, negotiation, and publicity) sets the stage for EaaS [1].

The EaaS model reflects a broader trend in cybercriminal activity, in which threat actors increasingly prioritize data theft and public exposure over traditional ransomware encryption. This shift reduces operational complexity while increasing pressure on victims through reputational damage. The approach has grown in popularity among threat actors because, unlike encryption-based attacks, these operations are more difficult to detect and remediate [2]. It mirrors the rise of ‘hack-and-leak’ operations, which favor stealth and speed over encrypting systems [3].

World Leaks Overview

World Leaks emerged in early 2025 as a direct rebrand of the Hunters International ransomware group, which was notorious for encrypting victims’ data and demanding payment for decryption keys. In mid-2025, Hunters International shifted to an extortion-only model due to law enforcement scrutiny and reduced profitability, rebranding itself as World Leaks.

World Leaks functions as an affiliate-based EaaS operation that provides proprietary Storage Software exfiltration tooling to affiliates. It maintains a four-platform infrastructure: a main data leak site hosted on the dark web where victim data is published, a victim negotiation portal with live chat, an affiliate management panel, and an insider journalist platform that grants media outlets 24-hour advance access to stolen data before public release [4]. Since its emergence, World Leaks has published data stolen from dozens of organizations globally on its data leak site, serving both as a pressure tactic and as a means of building reputation among cybercriminals.

World Leaks (known associations include Hive Ransomware, Secp0 Ransomware, and UNC6148) have been known to target the industrial (manufacturing) sector, along with healthcare organizations, technology firms and, more generally, industries with valuable intellectual property [4]. Victims have spanned multiple countries, with most located in the US, followed by Canada and several countries across Europe [5].

World Leaks’ Tactics, Techniques, and Procedures (TTPs) [3][4]

World Leaks’ typical attack pattern involves the exploitation of credentials with inadequate access controls, e.g. lacking multi-factor authentication (MFA), moving through reconnaissance, lateral movement and data exfiltration, notably without an encryption element.

Initial Access:

Initial access is typically gained by abusing valid accounts, in particular compromised virtual private network (VPN) credentials lacking MFA, as well as through phishing campaigns. The targeting of internet-facing VPN infrastructure, RDP, and public-facing applications also represents a common attack vector in World Leaks incidents.

Lateral Movement:

SMB, RDP, and SSH are used for lateral movement via remote services. Notably, the group is also known to use PsExec and Rclone as part of their lateral movement activities.

Persistence:

Persistence is achieved through registry key modifications, scheduled task creation, and account manipulation.

Exfiltration:

Data exfiltration is carried out through custom storage software tooling via TOR connections. Cloud storage services used for exfiltration particularly include MEGA. World Leaks also carry out direct data transfer through established command-and-control (C2) infrastructure.

Unlike Hunters International, which combined encryption with extortion, World Leaks claims to have abandoned the use of encryption. Some reports note that operations since January 2025 represent a pivot toward eliminating encryption entirely, instead relying on custom exfiltration tooling with SOCKSv5 proxy and TOR-based communications [4]. However, in early 2026, Darktrace detected an incident that directly contradicted this claim: World Leaks carried out an attack that involved both the exfiltration and encryption of customer data.

Darktrace’s Coverage of World Leaks Ransomware

Organizations today face a growing challenge: keeping pace with increasingly fast-moving threats. This incident highlights a common problem: when time-limited mitigations expire or human security teams cannot respond quickly enough, attackers are often able to regain the upper hand. A recent Darktrace detection of World Leaks ransomware provides a clear example of this challenge in practice.

In January 2026, Darktrace identified the presence of ransomware and data encryption linked to World Leaks on the network of an organization in the healthcare sector. Although Darktrace’s Autonomous Response capability was active in the customer’s environment and initially blocked suspicious connectivity, buying time for the customer to remediate, the attack continued once these mitigative actions expired. Darktrace continued to apply Autonomous Response actions as the attack progressed, working to inhibit the attackers at each stage of the intrusion.

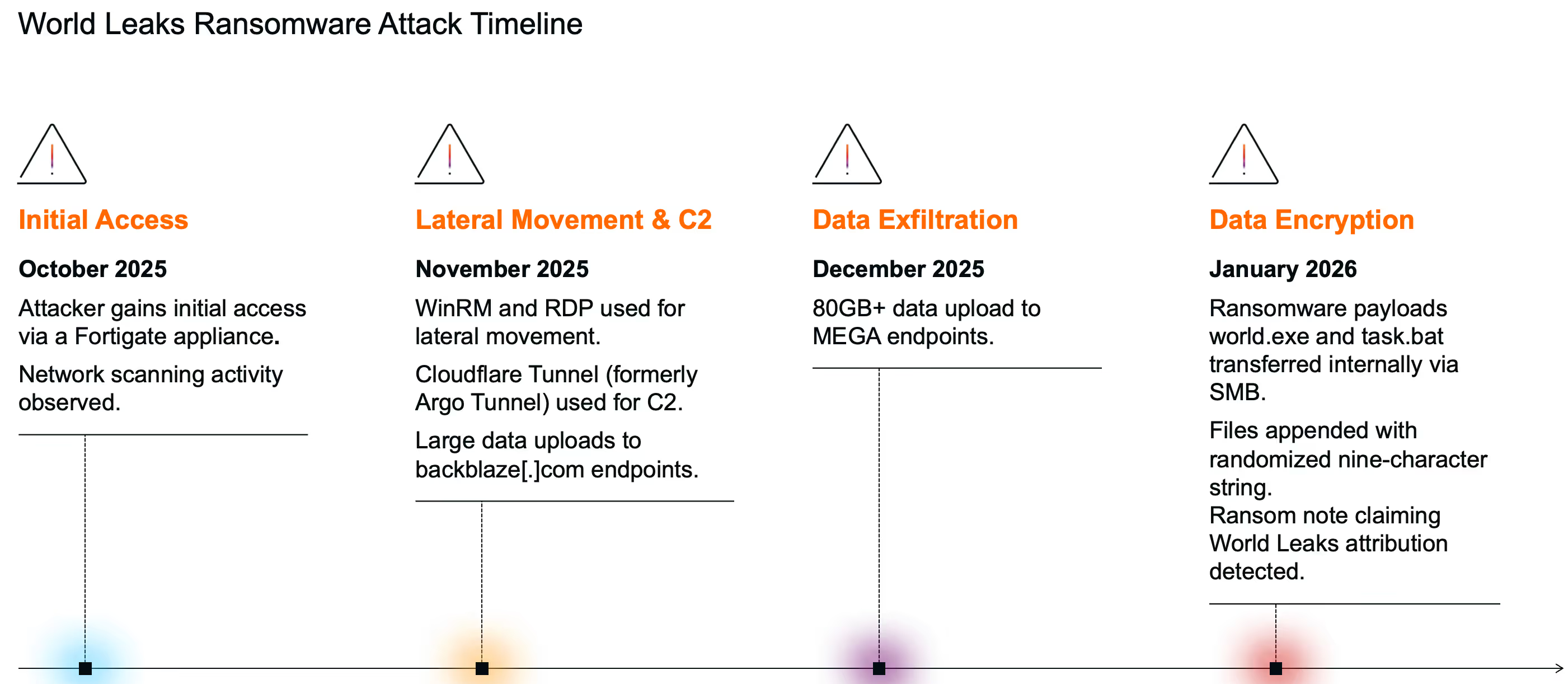

Investigations carried out by Darktrace revealed that threat actors likely gained initial access via a Fortigate appliance in mid-October, indicating a three-month dwell time, before employing living-off-the-land (LOTL) techniques for lateral movement. C2 communications were established using Cloudflare Tunnel (formerly Argo Tunnel). As part of the Actions on Objectives attack phase, a significant volume of data was exfiltrated to the MEGA cloud storage platform, followed by the encryption of customer data.

Initial access/ Lateral movement

Darktrace analysts identified the likely patient-zero device within the network as a Fortigate appliance. In October 2025, this device was seen conducting brute-force activity using the compromised ‘administrator’ credential to gain a foothold deeper within the customer’s environment. Masquerading as a privileged user, the threat actor then went on to launch activity on remote devices via PsExec, a common administrative tool that allows users to execute processes on remote systems without manually installing client software, providing significant power to attackers when abused. Around the same time, Darktrace detected an unknown device on the network attempting to authenticate via NTLM. As this device had not previously been seen on the network, it likely belonged to the attacker.

Reconnaissance

As part of the reconnaissance phase of the attack, port and network scanning was carried out in an attempt to identify open UDP and TCP ports within the network.

Lateral movement & C2

Around one month after entering the customer’s network, the World Leaks threat actors began tunnelling activity using Cloudflare Tunnel. Darktrace detected connections to several hostnames, including region2.v2.argotunnel[.]com, h2.cftunnel[.]com, and region1.v2.argotunnel[.]com. This tunnelling activity continued until January 2026, when encryption occurred. Cloudflare tunnels are known to be abused by attackers as they enable the use of temporary infrastructure to scale operations, allowing rapid deployment and teardown. Furthermore, leveraging Cloudflare’s infrastructure to create these rate-limited tunnels (used to relay traffic from an attacker-controlled server to a local machine) makes such malicious activity harder for both defenders and traditional security measures to detect, particularly those that rely on static blocklists [6].
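As a rough illustration of how the tunnelling hostnames above could be checked against DNS or proxy logs, the following Python sketch refangs the defanged hostnames and matches on their parent domains. The function names and sample queries are hypothetical, and matching on parent domains is an assumption made for illustration, not a description of Darktrace's detection logic.

```python
# Hypothetical sketch: flag hostnames that fall under the Cloudflare Tunnel
# parent domains seen in this incident. Not Darktrace's detection logic.

TUNNEL_HOSTNAMES_DEFANGED = [
    "region2.v2.argotunnel[.]com",
    "h2.cftunnel[.]com",
    "region1.v2.argotunnel[.]com",
]


def refang(indicator: str) -> str:
    """Turn a defanged indicator such as 'example[.]com' back into 'example.com'."""
    return indicator.replace("[.]", ".")


def parent_domain(hostname: str) -> str:
    """Return the last two labels of a hostname (naive: ignores multi-part TLDs)."""
    labels = hostname.lower().rstrip(".").split(".")
    return ".".join(labels[-2:])


# Assumption: matching on the parent domain also catches other regional endpoints.
TUNNEL_PARENT_DOMAINS = {parent_domain(refang(h)) for h in TUNNEL_HOSTNAMES_DEFANGED}


def is_tunnel_hostname(hostname: str) -> bool:
    """True if the hostname sits under one of the tunnel parent domains."""
    return parent_domain(hostname) in TUNNEL_PARENT_DOMAINS


if __name__ == "__main__":
    # Made-up sample queries for demonstration only.
    for name in ["region1.v2.argotunnel.com", "www.example.org", "H2.CFTUNNEL.COM."]:
        print(name, is_tunnel_hostname(name))
```

Because these domains also carry legitimate Cloudflare Tunnel traffic, a match is best treated as a prompt for review in context rather than as proof of compromise.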

Further lateral movement was carried out using common remote management tools such as Windows Remote Management (WinRM) and RDP, allowing the World Leaks threat actors to access local devices within the victim organization’s network.

As this attack progressed, Darktrace detected multiple files being written over SMB. These files included Windows\Temp\chromeremotedesktophost.msi, which was written from the patient-zero device to another internal device as part of lateral movement efforts. Following this transfer, and prior to subsequent data exfiltration activity, a network server was observed connecting to the hostname remotedesktop-pa[.]googleapis[.]com, an API endpoint required for Chrome Remote Desktop, indicating that Chrome Remote Desktop was used by the threat actor in this stage of the attack.

Other files written over SMB included the script programdata\syc\OpenSSHUtils.psm1 (which can be used legitimately to configure OpenSSH) and the executable programdata\syc\ssh‑sk‑helper.exe (a legitimate OpenSSH component used to support security keys). These files were written from the suspected patient‑zero device to an internal domain controller using the ‘administrator’ credential.

Thereafter, SSH connections to external IP address 51.15.109[.]222 were observed, providing another channel between the malicious actors and victim machines. Darktrace recognized that the use of SSH by the devices seen connecting to this IP address was highly anomalous, indicating that this suspicious activity formed part of the attack.

Writes of the script programdata\syc\OpenSSHUtils.psm1 were also observed into January, highlighting the continuation of the attack that had begun three months earlier.

On December 19 and 20, Darktrace detected a DNS server within the customer’s network making anomalous outgoing connections to an external IP address not previously seen in the environment: 193.161.193[.]99. This IP address has been reported by open-source-intelligence (OSINT) as being associated with C2 infrastructure, having been linked to several remote access trojans (RATs) and botnets in the past.

This activity suggests a shift towards the infrastructure-as-a-service (IaaS) model, underscoring the growing trend around As-a-Service cybercrime models and the increasing industrialization of botnets. The presence of extensive digital botnets, often leased to other criminal organizations, means the group gaining initial access is not necessarily the same group conducting ransomware deployment or data theft; botnets now act as shared underlying infrastructure enabling multiple forms of cybercriminal activity [7].

Furthermore, connections to this IP address (193.161.193[.]99) were made over port 1194, which is associated with OpenVPN, suggesting that World Leaks may have leveraged it to obfuscate C2 communication with attacker-controlled infrastructure.

![Darktrace’s detection of the IP address 193.161.193[.]99, noting that it was first seen within the customer’s network on December 19, 2025.](https://cdn.prod.website-files.com/626ff4d25aca2edf4325ff97/69b830390b1bcda7a148f5f9_Screenshot%202026-03-16%20at%209.30.46%E2%80%AFAM.avif)
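The two signals described above, an external IP address not previously seen in the environment and traffic on port 1194, can be combined in a simple check. The Python sketch below is a minimal illustration under stated assumptions: the `Connection` record, the function names, the baseline cut-off, and the sample timestamps are all hypothetical, and this is not how Darktrace models rarity.

```python
# Hypothetical sketch: record when each external IP is first contacted and
# surface new destinations reached on port 1194 (associated with OpenVPN).
from dataclasses import dataclass
from datetime import datetime

OPENVPN_PORT = 1194


@dataclass(frozen=True)
class Connection:
    timestamp: datetime
    source: str      # internal device label (made up for this example)
    dest_ip: str
    dest_port: int


def first_seen(connections):
    """Map each destination IP to the earliest timestamp it was contacted."""
    seen = {}
    for conn in connections:
        if conn.dest_ip not in seen or conn.timestamp < seen[conn.dest_ip]:
            seen[conn.dest_ip] = conn.timestamp
    return seen


def new_openvpn_destinations(connections, baseline_end):
    """Return destination IPs first contacted after baseline_end and reached on port 1194."""
    seen = first_seen(connections)
    return sorted({
        c.dest_ip for c in connections
        if c.dest_port == OPENVPN_PORT and seen[c.dest_ip] > baseline_end
    })


if __name__ == "__main__":
    # Sample records: only the IP and the December 19 date come from the article.
    records = [
        Connection(datetime(2025, 12, 1, 9, 0), "dns-server", "198.51.100.10", 53),
        Connection(datetime(2025, 12, 19, 14, 0), "dns-server", "193.161.193.99", 1194),
        Connection(datetime(2025, 12, 20, 8, 30), "dns-server", "193.161.193.99", 1194),
    ]
    print(new_openvpn_destinations(records, baseline_end=datetime(2025, 12, 15)))
```

A fixed baseline date is a simplification; in practice the comparison window and what counts as "new" would depend on how much history is available for the network.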

Data exfiltration

In November, Darktrace detected the threat actors carrying out one of their Actions on Objectives: data exfiltration. Multiple local devices within the compromised network began transferring data to Backblaze and MEGA domains, both of which provide cloud storage services; more than 80 GB of data was transferred to MEGA in late December 2025. Endpoints associated with this activity included backblazeb2[.]com and gfs302n520[.]userstorage[.]mega[.]co[.]nz, along with related identifiers such as the ASN AS40401 BACKBLAZE and the user agent MegaClient/10.3.0/64.
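To illustrate how upload volume to cloud storage endpoints like these might be tallied from flow records, the following Python sketch sums outbound bytes per watched domain and flags totals above a threshold. The function names, the 1 GiB threshold, the sample hostname f000.backblazeb2.com, and all byte counts are assumptions for illustration only.

```python
# Hypothetical sketch: total outbound bytes per watched cloud storage domain
# and flag large uploads. Threshold and sample data are illustrative only.
from collections import defaultdict

GIB = 1024 ** 3

# Refanged parent domains of the storage endpoints named in this incident.
WATCHED_DOMAINS = ["backblazeb2.com", "mega.co.nz"]


def match_watched_domain(hostname, watched_domains):
    """Return the watched domain that hostname equals or is a subdomain of, else None."""
    host = hostname.lower().rstrip(".")
    for domain in watched_domains:
        if host == domain or host.endswith("." + domain):
            return domain
    return None


def upload_totals(transfers, watched_domains):
    """Sum bytes_out per watched domain from (hostname, bytes_out) tuples."""
    totals = defaultdict(int)
    for hostname, bytes_out in transfers:
        domain = match_watched_domain(hostname, watched_domains)
        if domain is not None:
            totals[domain] += bytes_out
    return dict(totals)


def over_threshold(totals, threshold_bytes=GIB):
    """Return domains whose total upload meets or exceeds the threshold."""
    return sorted(d for d, total in totals.items() if total >= threshold_bytes)


if __name__ == "__main__":
    sample = [
        ("gfs302n520.userstorage.mega.co.nz", 2 * GIB),  # made-up byte count
        ("f000.backblazeb2.com", 100),                   # made-up host and count
        ("example.org", 5 * GIB),                        # not a watched domain
    ]
    totals = upload_totals(sample, WATCHED_DOMAINS)
    print(over_threshold(totals))
```

A static threshold will miss slow, low-volume exfiltration and may flag legitimate backups, so it is a starting point rather than a complete detection.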

Notably, Darktrace researchers identified two known World Leaks TTPs in this attack: the use of MEGA, a known tool abused by the group, and Rclone, a command-line tool used to manage files on cloud storage, which was observed in the user agent of the MEGA data-transfer connections: rclone/v1.69.0 [4].

![Cyber AI Analyst Incident highlighting data upload activity to backblaze[.]com endpoints.](https://cdn.prod.website-files.com/626ff4d25aca2edf4325ff97/69b830939a937990f1f80ad1_Screenshot%202026-03-16%20at%209.31.59%E2%80%AFAM.avif)
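The user agents noted above (rclone/v1.69.0 and MegaClient/10.3.0/64) lend themselves to simple pattern matching. The Python sketch below shows one way to pull a tool name and version out of a user agent string. The function name and the decision to treat any version of these tools as noteworthy are assumptions, and a user agent is trivially changed by an attacker, so this is a weak signal on its own.

```python
# Hypothetical sketch: pick out cloud-sync tooling from HTTP User-Agent strings.
import re

# Product tokens taken from the user agents reported in this incident.
# Treating any version of these tools as noteworthy is an assumption.
TOOL_PATTERN = re.compile(r"^(rclone|MegaClient)/v?(\d+(?:\.\d+)*)", re.IGNORECASE)


def identify_transfer_tool(user_agent: str):
    """Return (tool, version) if the user agent names rclone or MegaClient, else None."""
    match = TOOL_PATTERN.match(user_agent.strip())
    if not match:
        return None
    return match.group(1).lower(), match.group(2)


if __name__ == "__main__":
    for ua in ["rclone/v1.69.0", "MegaClient/10.3.0/64", "Mozilla/5.0"]:
        print(ua, identify_transfer_tool(ua))
```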

Ransomware deployment & encryption

The encryption stage of this attack was confirmed by a ransom note found on the network, which attributed the incident to World Leaks. The note’s filename consisted of a seemingly randomized nine-character string preceding README.txt, and an extension made up of the same nine characters was appended to encrypted files. Darktrace also observed SMB writes of files named world.exe and task.bat, with the compromised ‘localadmin’ credential used during the SMB logins. It is likely that these files served as the vector for the ransomware payload.
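The link between the ransom note name and the extension on encrypted files can be sketched in a few lines of Python. The example below uses the nine-character pattern listed in the IoCs appendix of this article; the function names and sample filenames are hypothetical, and the input is assumed to be a list of bare filenames rather than full paths.

```python
# Hypothetical sketch: link a ransom note to files carrying the same extension.
import re

# Nine-character pattern as listed in the IoCs appendix of this article.
ID_PATTERN = r"[A-Z][a-z]{3}[A-Z][a-z][A-Z]{3}"
NOTE_RE = re.compile(rf"^({ID_PATTERN})\.README\.txt$")


def note_ids(filenames):
    """Collect the nine-character identifiers found in ransom note names."""
    ids = set()
    for name in filenames:
        match = NOTE_RE.match(name)
        if match:
            ids.add(match.group(1))
    return ids


def files_with_note_extension(filenames):
    """Return files whose final extension equals an identifier from a ransom note."""
    ids = note_ids(filenames)
    return [n for n in filenames if "." in n and n.rsplit(".", 1)[1] in ids]


if __name__ == "__main__":
    # Made-up filenames; 'AbcdEfGHI' is an invented identifier matching the pattern.
    observed = ["AbcdEfGHI.README.txt", "report.docx.AbcdEfGHI", "notes.txt"]
    print(files_with_note_extension(observed))
```

Requiring both the note and a matching extension reduces the chance of flagging an unrelated file that merely happens to fit the nine-character pattern.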

Conclusion

Though traditional ransomware relies on encryption, recent trends show that cyber threat actors no longer need to rely on noisy encryption tools and can eliminate much of the risk and technical complexity associated with encrypting systems. This is the model reportedly preferred by World Leaks after their rebrand from Hunters International.

In addition to reducing noise around these attacks, extortion-only operations may be favored by threat actors over encryption-focused ones for several reasons. Traditional security tools may struggle to detect data theft compared to encryption, attackers leave less evidence behind when encryption is avoided, and the long-term impact of stolen data on an organization can be greater than the loss of systems to encryption, since encrypted systems can be restored [8]. This is supported by analysis of data leak sites suggesting that almost 1,500 incidents in 2025 relied on data theft alone. Attackers can simply steal victim data and attempt to extort a ransom by threatening to publish it, without needing to deploy ransomware at all [9]. Furthermore, although World Leaks aims to function as an affiliate-based EaaS operation, security teams should remain aware that its affiliates may have different criminal objectives.

Contrary to reports that World Leaks’ typical attack style has an extortion‑only objective, Darktrace detected an incident in which a World Leaks attack did end with the encryption of customer data. This highlights the need for adaptive defenses and reinforces the importance of network defenders staying proactive in the face of attacks, particularly as they may progress in ways that are unexpected compared to previous trends associated with a given threat actor.

Credit to Tiana Kelly (Senior Cyber Analyst and Analyst Manager) and Emily Megan Lim (Senior Cyber Analyst)

Edited by Ryan Traill (Content Manager)

Appendices

IoCs

· world.exe – Executable File – Possible Ransomware Payload

· task.bat – Script File – Possible Ransomware Payload

· ^[A-Z][a-z]{3}[A-Z][a-z][A-Z]{3}[.]README[.]txt – Ransom Note

· [.]^[A-Z][a-z]{3}[A-Z][a-z][A-Z]{3} – Ransomware file extension

· 51.15.109[.]222 – IP Address – Possible C2 Infrastructure

· 193.161.193[.]99 – IP Address – Probable C2 Infrastructure

Darktrace Model Detections (Enhanced Monitoring models denoted with an asterisk)

· Device / Attack and Recon Tools

· Device / Suspicious SMB Scanning Activity

· Device / Anomalous NTLM Brute Force

· Compliance / Connection to Tunnelling Service

· Device / Suspicious New User Agents

· Device / New or Unusual Remote Command Execution

· Compliance / SMB Drive Write

· Anomalous Connection / Uncommon 1 GiB Outbound

· Compromise / Ransomware / Ransom or Offensive Words Written to SMB

· Device / Multiple Lateral Movement Model Alerts*

· Device / SMB Lateral Movement

· Unusual Activity / Sustained Anomalous SMB Activity

· Device / Large Number of Model Alerts

· Compromise / Ransomware / SMB Reads then Writes with Additional Extensions

· Compromise / Ransomware / Suspicious SMB Activity*

· Anomalous File / Internal / Additional Extension Appended to SMB File

· Unusual Activity / SMB Access Failures

· Unusual Activity / Enhanced Unusual External Data Transfer*

· Device / Suspicious File Writes to Multiple Hidden SMB Shares

· Anomalous Server Activity / Rare External from Server

· Unusual Activity / Unusual Mega Data Transfer*

· Device / Possible SMB/NTLM Brute Force

· Anomalous Connection / Unusual Admin RDP Session

· Anomalous Connection / Active Remote Desktop Tunnel

· Anomalous Connection / Data Sent to Rare Domain

· Anomalous Connection / New or Uncommon Service Control

· Anomalous Connection / New or Uncommon Service Enumeration

· Anomalous Connection / Rare WinRM Outgoing

· Anomalous Connection / SMB Enumeration

· Anomalous Connection / Unusual Incoming Long Remote Desktop Session

· Anomalous Connection / Upload via Remote Desktop

· Anomalous File / Internal / Executable Uploaded to DC

· Anomalous File / Internal / Unusual SMB Script Write

· Compliance / SSH to Rare External Destination

· Device / Anomalous Github Download

· Device / Anonymous NTLM Logins

· Device / Network Scan

· Device / New or Uncommon WMI Activity

· Device / New User Agent To Internal Server

· Device / Possible Brute-Force Activity

· Device / RDP Scan

· Device / SMB Session Brute Force (Admin)

· Device / SMB Session Brute Force (Non-Admin)

· Device / Suspicious Network Scan Activity

· Unusual Activity / Successful Admin Brute-Force Activity

· Unusual Activity / Unusual External Data to New Endpoint

· Unusual Activity / Unusual External Data Transfer

· Unusual Activity / Unusual File Storage Data Transfer

· User / New Admin Credentials on Server

Cyber AI Analyst Incidents

· Scanning of Multiple Devices

· Large Volume of SMB Login Failures to Multiple Devices

· Suspicious Chain of Administrative Connections

· SMB Write of Suspicious File

· Suspicious DCE-RPC Activity

· Unusual External Data Transfer

· Unusual External Data Transfer to Multiple Related Endpoints

· Unusual External Data Transfer to Endpoints

MITRE ATT&CK Mapping

· Initial Access – T1190 – Exploit Public-Facing Application

· Defense Evasion, Initial Access, Persistence, Privilege Escalation – T1078 – Valid Accounts

· Resource Development – T1588.001 – Obtain Capabilities: Malware

· Reconnaissance – T1590.005 – Gather Victim Network Information: IP Addresses

· Reconnaissance – T1592.004 – Gather Victim Host Information: Client Configurations

· Reconnaissance – T1595.001 – Active Scanning: Scanning IP Blocks

· Reconnaissance – T1595.002 – Active Scanning: Vulnerability Scanning

· Reconnaissance – T1595.003 – Active Scanning: Wordlist Scanning

· Discovery – T1018 – Remote System Discovery

· Discovery – T1046 – Network Service Discovery

· Discovery – T1083 – File and Directory Discovery

· Discovery – T1135 – Network Share Discovery

· Command and Control – T1219 – Remote Access Tools

· Command and Control – T1219.002 – Remote Access Tools: Remote Desktop Software

· Command and Control – T1571 – Non-Standard Port

· Command and Control – T1572 – Protocol Tunneling

· Command and Control – T1573.001 – Encrypted Channel: Symmetric Cryptography

· Credential Access – T1110 – Brute Force

· Credential Access – T1110.001 – Brute Force: Password Guessing

· Defense Evasion – T1006 – Direct Volume Access

· Defense Evasion – T1564.005 – Hide Artifacts: Hidden File System

· Defense Evasion – T1564.012 – Hide Artifacts: File/Path Exclusions

· Execution – T1047 – Windows Management Instrumentation

· Execution – T1569.002 – System Services: Service Execution

· Lateral Movement – T1021 – Remote Services

· Lateral Movement – T1021.001 – Remote Services: Remote Desktop Protocol

· Lateral Movement – T1021.002 – Remote Services: SMB/Windows Admin Shares

· Lateral Movement – T1021.006 – Remote Services: Windows Remote Management

· Lateral Movement – T1080 – Taint Shared Content

· Lateral Movement – T1210 – Exploitation of Remote Services

· Lateral Movement – T1570 – Lateral Tool Transfer

· Collection – T1039 – Data from Network Shared Drive

· Collection – T1074 – Data Staged

· Exfiltration – T1041 – Exfiltration Over C2 Channel

· Exfiltration – T1048 – Exfiltration Over Alternative Protocol

· Exfiltration – T1567.002 – Exfiltration Over Web Service: Exfiltration to Cloud Storage

References

[1] https://www.levelblue.com/blogs/levelblue-blog/extortion-as-a-service-the-latest-threat-actor-criminal-ecosystem/

[2] https://blackpointcyber.com/wp-content/uploads/2025/12/World-Leaks.pdf

[3] https://blackpointcyber.com/threat-profile/world-leaks-ransomware/

[4] https://www.halcyon.ai/threat-group/worldleaks

[5] https://www.moxfive.com/resources/moxfive-threat-actor-spotlight-world-leaks

[6] https://thehackernews.com/2024/08/cybercriminals-abusing-cloudflare.html

[7] https://www.trendmicro.com/vinfo/tw/security/news/threat-landscape/the-industrialization-of-botnets-automation-and-scale-as-a-new-threat-infrastructure

[8] https://www.morphisec.com/blog/ransomware-without-encryption-why-pure-exfiltration-attacks-are-surging-and-why-theyre-so-hard-to-catch/

[9] https://sed-cms.broadcom.com/sites/default/files/2026-01/RWN-2026-WP100_1.pdf